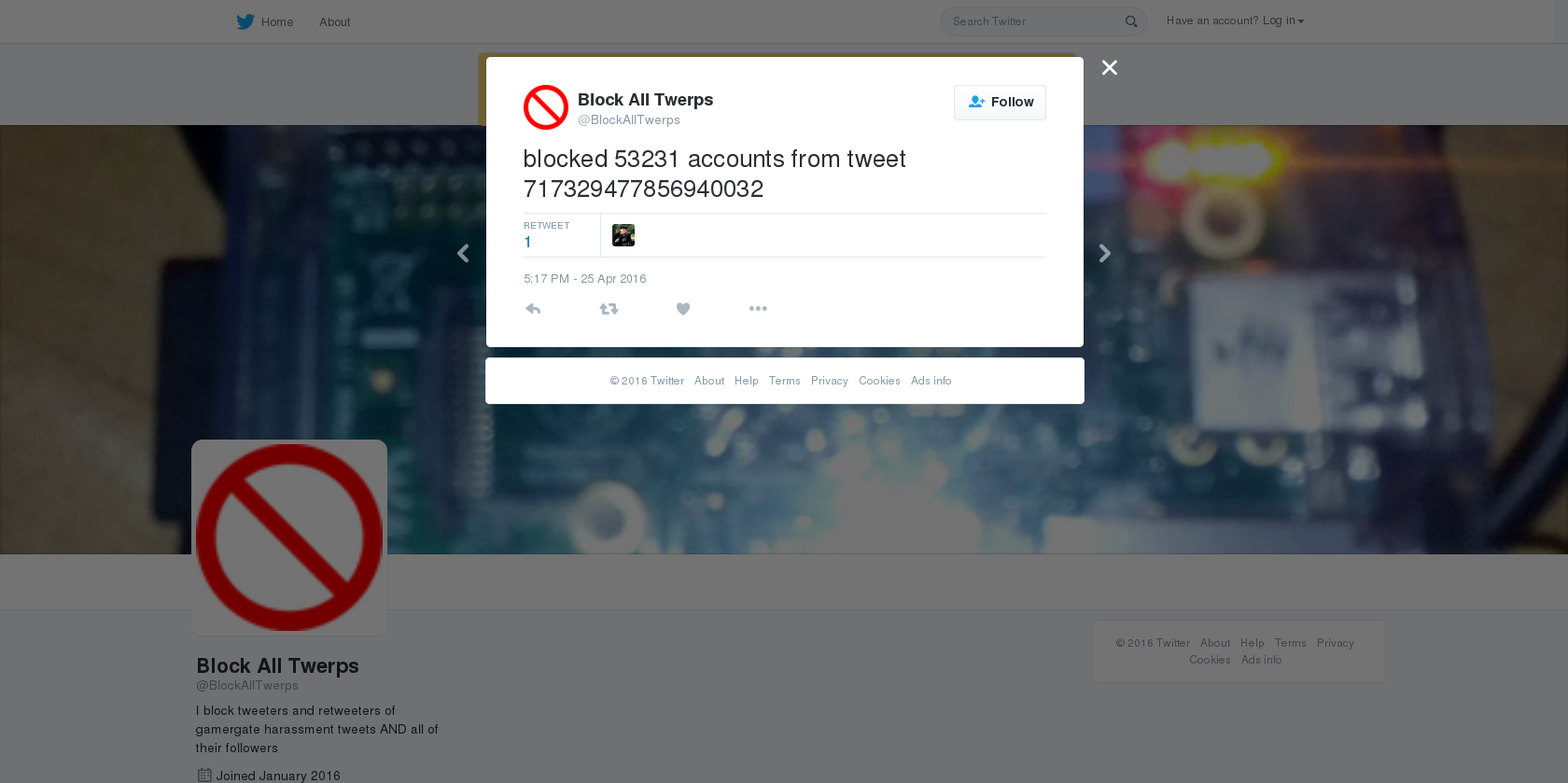

@BlockAllTwerps

Twitter is awash with rubbish. Aside from annoying or uninteresting content, there is the intentionally bad: tweets posted when one community is thrown together with another whose goals conflict with its own. For example, fascists take exception to feminists and a pile-on ensues. This dynamic is exacerbated by the fact that Twitter is a largely unmoderated platform.

Although blocking tools can prevent targets from seeing harassment, abusive tweets function as a form of performativity which attackers use to impress each other and to shore up their group identity. This rubbish is therefore meaningful within a social milieu.

Meanwhile, those under attack have taken to sharing block lists within their communities, turning to collaborative blocking as a response to the lack of moderation.

I explore the creation of these blocking tools as a form of gendered labour, given that women are disproportionately likely to suffer online abuse.

However, these shared tools have a darker side: when block lists are built in a big-data style, some community members can find themselves shut out if they are caught up in the filters.